Key Takeaways from Multispeaker Reflections on AI, Society, and Human Values

Joichi Ito: Rethinking Progress through Eastern Perspectives

A Narrative of Coexistence, Not Control

Western culture, shaped by monotheistic hierarchies, tends to see technology as subordinate tools: God at the top, humans in the middle, and technology below—once slaves, now machines. In contrast, Eastern traditions, especially in Japan, present a worldview where humans and tools coexist. Technology is not something to dominate, but something to live with—integrated and respected.

Capitalism, inherited from the West, predicates itself on constant growth: no expansion equals death. But Japan demonstrates a cultural capacity to maintain purpose and dignity even in economic stagnation. The centuries-long cyclical rebuilding of Ise Jingu Shrine and the dedication of craftspeople who preserve their trades without scaling or growth illustrate a different kind of meaningful continuity.

The Concept of Pure Experience

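“To experience means to know facts just as they are… For example, it refers to the instant of seeing a color or hearing a sound, before there is any thought that this is the action of an external thing or that I am the one sensing it, and before even the judgment of what this color or this sound is has been added. Pure experience is therefore identical with direct experience. When one directly experiences one’s own state of consciousness, there is as yet neither subject nor object; knowledge and its object are completely one. This is the purest form of experience.” Kitarō Nishida, An Inquiry into the Good (善の研究, 1911), ch. 1, “Pure Experience”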

Kitarō Nishida’s concept of 純粋経験 (pure experience) highlights a state of unmediated perception—before subject-object division or judgment arises. At the moment of seeing a color or hearing a sound, there is neither “me” who perceives nor “thing” being perceived—just an undivided experience. This challenges the reductionist view of humans as merely information processors.

If you believe you’re merely receiving information in a simulated world, then you might believe AI can replace humans. But if you believe you experience the world—uniquely and bodily—then human subjectivity, values, and the irreducibility of experience cannot be replaced by AI. Pure experience resists simulation.

Lessons from the Tea Ceremony

一期一会 (ichi-go ichi-e, “one time, one meeting”) 和敬清寂 (wa-kei-sei-jaku): Harmony, Respect, Purity, Tranquility

Sen no Rikyū’s legacy teaches subtle but radical transformation. In his fifties, he adopted bamboo—once seen as a humble, everyday material—as a flower vase in the formal tea ceremony, where porcelain and bronze had been the standard. His gesture redefined aesthetic value and social norms. The shift was so resonant that it felt immediately natural—“Oh, of course.”

True transformation happens when one reads the atmosphere, understands the unspoken systems at play, and then introduces a change that feels both surprising and inevitable. Rikyū’s actions are a lesson in timing, presence, and cultural fluency.

What Kaizen Teaches about Presence

Toyota’s philosophy of Kaizen is not simply optimization; it is the removal of waste (無駄, muda), overburden (無理, muri), and unevenness (斑, mura). This is done not just by following top-down orders but through continuous observation on the factory floor. Managers physically immerse themselves in the process, watching everyone’s movements, identifying waste and inefficiencies, and understanding how work actually gets done at that particular factory. When you spend an entire day at each site, you notice things you would otherwise miss, things that emerge only from the sense of presence you experience there.

Top-down and bottom-up coexist. Human experience on-site is as important as the top-down action list for achieving the best operation in the world.

This kind of situated knowledge cannot be abstracted. It’s experiential and context-specific. AI, therefore, should learn from this model: not aim for abstract optimization, but support reduction of real-world friction through situated presence and human judgment.

AI Should Serve Human Values, Not Replace Them

Just like in Toyota’s model, AI must be bounded by human values on one side and human presence on the other. It should not aim for total optimization. Instead, it should help remove stress and waste while remaining embedded in human environments and cultures. AI lacks access to values and lived experience—it must be constrained and grounded by them.

    +--------------------------+
    |        OUR VALUES        |
    +--------------------------+
                 ↓
    +--------------------------+
    |       AI OPERATION       |
    +--------------------------+
                 ↑
    +--------------------------+
    | HUMAN EXPERIENCE ON-SITE |
    +--------------------------+

Venerable Tenzin Priyadarshi Rinpoche: Ethical Grounding Beyond Techno-Utopianism

Empire Builders vs. Universe Builders

Quoting George Bernard Shaw:

“The reasonable man adapts himself to the world; the unreasonable one persists in trying to adapt the world to himself. Therefore all progress depends on the unreasonable man.”

From Man and Superman (1903)

Rinpoche distinguishes between those building empires—focused on control and domination—and those seeking to build meaningful, harmonious relationships within the universe.

From Valid Questions to Relevant Questions

Citing Jeremy Bentham’s insight—“The question is not, can they reason? nor can they talk? but, can they suffer?”—Rinpoche argues that mimicking emotion is not the same as experiencing it. True understanding of human life requires grappling with suffering. Self-awareness in machines remains reductionist; it lacks the complexity of purposefulness rooted in human vulnerability.

Moving Beyond the Myth of Democratized AI

The early Internet was fueled by techno-optimism and dreams of a democratic information commons. Thirty years later, reality looks different. Infrastructure is monopolized.

So the game of AI development is already skewed.

AI research is dominated by seven companies. Startups aspire not to democratize, but to be acquired. Democratization under the current economic framework is not working.

Ethical Framing vs. Compliance

We often mistake legal compliance for ethical behavior. True civic values—trust, compassion, kindness, fearlessness, respect, dignity—are instilled imperfectly, and we lack robust frameworks to teach ethics meaningfully. What is ethical may not be legal, and vice versa. We need frameworks that allow us to be better, not just compliant.

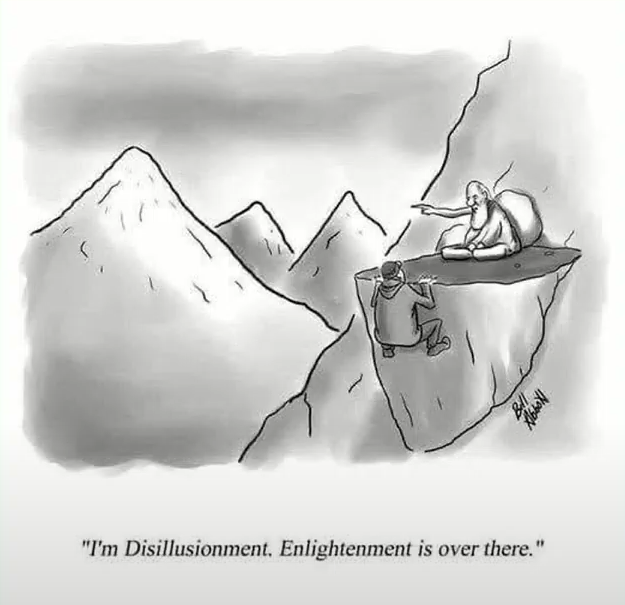

Ignorance and the Echo Chamber of Expertise

Experts are trained in narrow domains. Policymakers and engineers often exist in epistemic bubbles. Expertise is not omniscience, and we must start by acknowledging the limitations of knowledge. Only through disillusionment can we reach meaningful clarity.

![[disillusionment_tenzin-priyadarshi.png]]


Toward a Narrative We Can Live With

Muriel Rukeyser wrote, “The universe is made of stories, not atoms.” Without meaningful narratives, we risk building tools we can’t integrate into our lives. The challenge is not just technical but philosophical: can we relate to AI in ways that deepen rather than flatten our experience?

If this question is not answered, we become no more than couch potatoes looking at the world from the outside.

![[couching_sipress_tenzin priyadarshi.png]]

Robert Bench (MIT Media Lab): The Incentive Problem in the AI Age

Incentives Drive Outcomes

Why do we act, consciously or subconsciously? Over the past 30 years, the architecture of the Internet has shifted from open protocols to ad-driven ecosystems. Subscription models failed. The HTTP 402 (Payment Required) status code was reserved with micropayments in mind, but no standard payment scheme was ever built on it. Today, Google and Meta are advertising companies, not information companies.
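To make the HTTP 402 point concrete, here is a minimal sketch of what a pay-per-article endpoint could return. The function name `respond_to_article_request` and the `has_paid` flag are hypothetical, invented for illustration; only the status code itself comes from HTTP, and it is exposed in Python's standard library as `HTTPStatus.PAYMENT_REQUIRED`.

```python
from http import HTTPStatus


def respond_to_article_request(has_paid: bool) -> tuple[int, str]:
    """Return (status_code, body) for a hypothetical pay-per-article endpoint."""
    if has_paid:
        return HTTPStatus.OK.value, "full article text"
    # 402 is defined in HTTP but reserved for future use, so there is no
    # standard way for the client to learn how, or how much, to pay.
    return HTTPStatus.PAYMENT_REQUIRED.value, "payment required to read this article"


status, body = respond_to_article_request(has_paid=False)
print(status, HTTPStatus(status).phrase)
```

The sketch stops exactly where the real protocol stops: the server can say that payment is required, but everything after that (price, currency, settlement) would have to be invented by each site.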

You don’t use the machine. The machine uses your time.

As Charlie Munger said, “Show me the incentive, and I will show you the outcome.”

![[attention economy_robert bench.png]]

The Erosion of Attention

The “attention economy” used to mean likes and clicks. But in 2017, the paper “Attention Is All You Need,” which introduced the transformer architecture behind today’s large language models, gave the word a second meaning. Now AI agents (from OpenAI, Anthropic, etc.) will mediate most interactions. Soon, your assistant, not you, will decide what you see. The new interface is not a screen; it is an agent.

If agents control attention but can’t set their own incentives, advertisers will target them. Ads will continue, now optimized not for you, but for your agent. A new layer of mediation, potentially more efficient—and more invasive.

![[structure agent_robert bench.png]]

Stablecoins and the Future of Internet Incentives

![[structure stablecoin_robert bench.png]]

Most websites stay “free” by selling our attention to advertisers, because charging tiny amounts directly for each page view isn’t practical: ordinary payment networks are too slow and their fees swallow pennies. Stablecoins—digital tokens whose value stays pegged to real money—look like a solution because, in theory, they can move funds worldwide in seconds for a fraction of a cent, letting a site bill you two‑tenths of a cent per article instead of showing ads. The problem is that today’s blockchains still confirm only a few hundred—or at best a few thousand—transactions per second (Visa handles tens of thousands), and their fees spike when demand surges. Until a stablecoin network can process vast numbers of payments almost instantly, at near‑zero cost and without gatekeeping intermediaries, advertising remains the cheaper, simpler way for platforms to earn money.
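The claim that fees "swallow pennies" can be checked with a little arithmetic. The sketch below compares what a site would keep from a 0.2-cent article charge under two fee schedules. Both schedules are assumptions chosen for illustration (a flat fee plus a percentage for cards, a tiny flat fee for a stablecoin rail), and the `PaymentRail` class is hypothetical, not taken from the talk or any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PaymentRail:
    name: str
    fixed_fee_usd: float  # flat fee charged on every transaction
    percent_fee: float    # proportional fee, e.g. 0.029 means 2.9%

    def net_revenue(self, price_usd: float) -> float:
        """Amount the site keeps from one payment after fees."""
        return price_usd * (1 - self.percent_fee) - self.fixed_fee_usd

    def break_even_price(self) -> float:
        """Smallest price at which a single payment stops losing money."""
        return self.fixed_fee_usd / (1 - self.percent_fee)


ARTICLE_PRICE_USD = 0.002  # two-tenths of a cent per article, as in the text

# Both fee schedules are illustrative assumptions, not quotes from any provider.
card = PaymentRail("card network", fixed_fee_usd=0.30, percent_fee=0.029)
stablecoin = PaymentRail("stablecoin rail", fixed_fee_usd=0.0001, percent_fee=0.0)

for rail in (card, stablecoin):
    net = rail.net_revenue(ARTICLE_PRICE_USD)
    print(f"{rail.name}: net per article ${net:+.4f}, "
          f"break-even price ${rail.break_even_price():.4f}")
```

Under these assumed numbers the card payment loses money on every article, while the low-fee rail keeps most of the charge. The flat per-transaction fee, not the percentage, is what makes tiny payments impractical.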

Stablecoins offer a tantalizing alternative: a way to transact without ads. But current networks are too slow and costly to compete. To replace ad revenue, stablecoins must process microtransactions at internet scale—low cost, high speed, no intermediaries.
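What "internet scale" demands can be estimated roughly. The sketch below converts a daily volume of paid page views into an average transactions-per-second figure. The volume of one billion payments per day and the capacity of 3,000 transactions per second are assumptions picked for illustration (the capacity stands in for the "few thousand" upper end mentioned in the text), and `average_tps` is a hypothetical helper.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def average_tps(payments_per_day: int) -> float:
    """Average transactions per second needed to settle a daily volume."""
    return payments_per_day / SECONDS_PER_DAY


# Assumed load: one billion paid page views per day (illustrative only).
required_tps = average_tps(1_000_000_000)

# Assumed network capacity in transactions per second (illustrative only).
assumed_capacity_tps = 3_000

print(f"required: about {required_tps:,.0f} TPS on average")
print(f"shortfall: about {required_tps / assumed_capacity_tps:.1f}x the assumed capacity")
```

This is an average, so real traffic peaks would push the requirement higher. Even so, it shows why a single high-traffic platform could exceed such a network on its own.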

Bench proposes a protocol-level solution akin to TCP/IP: a system built for millions of fast, small transactions that can underwrite an alternative value system on the web.

We must literally buy back our time.

Eric Lagier: Nordic Perspectives on AI Culture

Education shapes not only knowledge but disposition. In some cultures, people are taught to be shepherds: nurturing and responsible. In others, they are trained as wolves, maximizing extraction until they are caught. The Nordic model is the former, although Eric himself seemed to aspire to the latter, an Americanized approach.

Small countries like Denmark are forced to think globally from the start. They don’t chase features—they define categories. Strategic design from day one, grounded in values and long-term thinking, offers a template for ethical innovation.

Lovable: The Fastest Growing AI App Builder

Lovable is a Swedish AI start-up that exploded onto the scene in late 2024. Founded by Anton Osika and a team of former Spotify and Klarna engineers, Lovable enables anyone—even non-coders—to build full-stack web and mobile apps simply by chatting with an AI assistant.

Lovable recently launched a major new product: “Agent Mode.” This feature empowers AI to edit entire codebases, call APIs, refactor logic, and generate interfaces with minimal user input. The platform isn’t just no-code—it’s agentic software engineering at scale, and emblematic of the shift toward AI as co-developer.

Ujjwal Deep Dahal: Bhutan’s Path to Sovereign Tech

Ujjwal Deep Dahal is the CEO of Druk Holding and Investments (DHI), Bhutan’s sovereign investment and innovation authority. With a background in electrical engineering and energy policy (MIT SPURS Fellow, 2018), Dahal has led Bhutan’s efforts to build a sovereign, sustainable tech ecosystem that aligns with the country’s Gross National Happiness philosophy.

Under his leadership, Bhutan has launched “Bhutanverse” in partnership with The Sandbox to showcase Bhutanese culture in the metaverse and train locals in Web3 creative skills. DHI has also partnered with Bitdeer to establish carbon-neutral cryptocurrency mining, leveraging Bhutan’s abundant hydropower. Meanwhile, a national rollout of 5G and blockchain-based digital identity systems aims to lay the groundwork for AI-driven public services.

However, Dahal emphasizes that infrastructure alone is not enough. Bhutan still lacks autonomous research capacity and depends on international collaboration to build talent and open innovation pipelines. He continues to advocate for global partnerships rooted in sustainability and shared knowledge—rather than short-term extractive capital.

Afterthoughts

Joi’s and Tenzin’s ideas are inspiring in one particular sense: the scientific hype around AI should be grounded in a philosophical soundness that keeps it aligned with our experiential world. Context making is as important as tool making.

There is a reason a PhD is a Doctor of Philosophy, no matter the domain. I would seriously expect somebody who holds a PhD in AI to be able to ponder and answer some of the philosophical concerns. Not only for yourself, but for the majority of people who are outside research facilities, outside well-resourced labs, outside Silicon Valley, outside developed countries, outside the privileges you take for granted.

I was angry to hear an AI scientist say “it’s too philosophical for me to answer.” There is a deep divide between audiences: engineering-oriented conferences are excited and optimistic about the race to superintelligence, while more philosophically oriented conferences are confused and pessimistic, yet lack the grounded operational knowledge and guidelines that would help us move forward.

Putting the anger aside, I’m interested in bridging the gap between science and philosophy. I think design and art play some role in bridging it. How stablecoins might enter the scene and shape the future economy and its context was also an eye-opener.

Another point that made me uneasy was the apparently weak position Bhutan has taken. The country wants a place in the AI landscape, but it lacks autonomous, active research. Even though it has an ideological attractor, which is already a resource out of reach for many underdeveloped, exploited nations, the speech sounded like passive begging for collaboration, asking global engineers to come. I don’t think it will work, and I think many other countries will fare even worse as global power accumulates. I’m interested in seeing how Bhutan’s national policy turns out in the future, especially in the local community.

Related notes

- Digital Garage

- Stewart Brand: the Whole Earth Catalog

- Reid Hoffman: PayPal and LinkedIn

- Lawrence Lessig: Creative Commons

- Jimmy Wales: Wikipedia